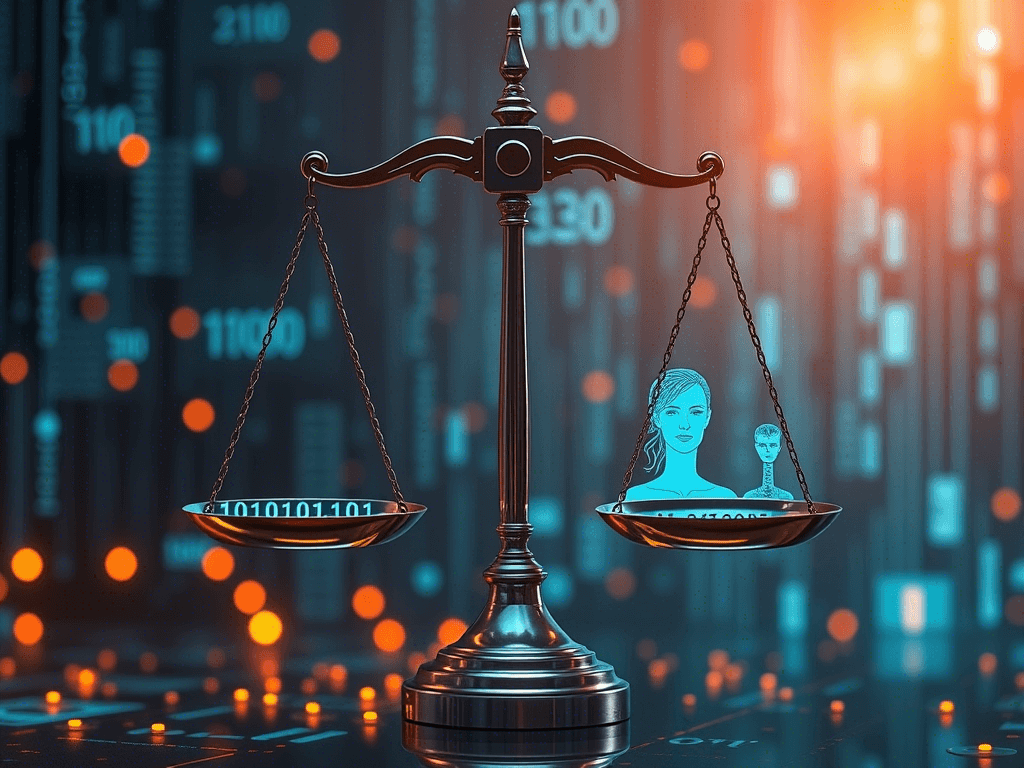

As artificial intelligence spreads through sectors such as healthcare, criminal justice, and recruitment, addressing the ethical concerns around it has become urgent. Two of the foremost challenges are bias and accountability in machine learning systems. Both bear directly on whether AI is fair, transparent, and responsible.

Understanding Bias in AI

Bias in AI can manifest in numerous ways, often as a result of the data used to train algorithms. Machine learning systems learn from historical data, and if that data contains biases—whether they be based on race, gender, socioeconomic status, or other factors—the AI can inadvertently perpetuate or even amplify those biases. For instance, a facial recognition system trained predominantly on images of light-skinned individuals may perform poorly with darker-skinned populations, leading to inaccuracies in identification.

Such biases do more than degrade a model's accuracy. In areas like hiring or law enforcement, biased algorithms can entrench systemic inequality, reinforce stereotypes, and harm marginalized communities. Acknowledging and addressing bias is therefore crucial to developing ethical AI systems.
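
The performance gap in the facial recognition example can be made visible by reporting accuracy per group instead of a single aggregate figure. The following is a minimal sketch rather than a definitive evaluation method; the function name `accuracy_by_group`, the group labels, and the toy data are all hypothetical.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for parallel lists of labels, predictions, and group tags."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


# Hypothetical toy data: 1 = match, 0 = no match.
y_true = [1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 1, 0, 1, 0, 0, 1, 1]
groups = ["lighter"] * 5 + ["darker"] * 3

# Aggregate accuracy across all samples, then the per-group breakdown.
overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)

print(f"overall: {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

On this toy data, an aggregate accuracy of 0.75 hides a per-group split of 1.00 versus 0.33, which is the kind of disparity a single headline metric can conceal.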

The Roots of Bias

There are several root causes of bias in AI:

- Data Selection: If the dataset used to train an AI model does not represent the diversity of the population it serves, the model can produce biased outcomes. For example, medical datasets that primarily reflect the health characteristics of one demographic group may not yield accurate insights for others.

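- Feature Selection: The features selected for a model can also introduce bias. If certain characteristics are emphasized disproportionately because of historical prejudices, the results can be skewed. For example, a postal code can act as a proxy for race or income even when those attributes are excluded from the model.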

- Human Influence: Developers’ unconscious biases can infiltrate the design and training phases of AI development, leading to systems that might reflect their creators’ subjective viewpoints.

- Feedback Loops: The use of AI in decision-making can create feedback loops. A biased system that denies loans to individuals from specific demographics can widen the existing disparity, and when later decisions draw on the resulting data, they inherit and reinforce that bias.
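
The feedback-loop cause in the list above can be illustrated with a toy simulation. This is a sketch under strong simplifying assumptions, not a model of any real lending system: the update rule (approval raises a group's average score, denial lowers it), the threshold, the step size, and the group names are all hypothetical.

```python
def simulate_feedback_loop(initial_scores, rounds, threshold=0.5, step=0.05):
    """Toy model: each round's decision feeds back into the score used next round."""
    scores = dict(initial_scores)
    gaps = [max(scores.values()) - min(scores.values())]
    for _ in range(rounds):
        for group, score in list(scores.items()):
            approved = score >= threshold        # decision uses the current score
            delta = step if approved else -step  # outcome shifts the future score
            scores[group] = min(1.0, max(0.0, score + delta))
        gaps.append(max(scores.values()) - min(scores.values()))
    return scores, gaps


# Hypothetical starting point: two groups separated by a small initial gap.
final_scores, gaps = simulate_feedback_loop(
    {"group_a": 0.55, "group_b": 0.45}, rounds=5
)

print(f"initial gap: {gaps[0]:.2f}")
print(f"final gap:   {gaps[-1]:.2f}")
```

Under these assumptions, an initial gap of 0.10 grows to 0.60 after five rounds, even though nothing about the groups themselves changed. Only the decisions did.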

Accountability in AI

The issue of accountability complicates the ethical landscape of AI even further. When decisions made by an AI system result in negative outcomes, who is to blame? Is it the developers who created the algorithm, the organizations deploying it, or the data suppliers who provided biased data?

Establishing accountability in AI requires a multi-faceted approach:

- Clear Regulations: Governments and regulatory bodies must develop frameworks that establish ethical standards for AI development and deployment. These frameworks should include guidelines for data transparency and usage, along with requirements for bias audits.

- Transparency in Algorithms: Companies should disclose how their AI systems work, including the datasets used and decision-making processes. Algorithmic transparency allows stakeholders—including consumers and affected communities—to understand and challenge the outcomes of AI systems.

- Human Oversight: Implementing robust human oversight for critical decision-making processes is essential. AI should not replace human judgment but rather augment it, ensuring that decisions affecting people’s lives include human ethics and empathy.

- Diversity in Development: Diverse teams can help identify biases that might not be apparent to a more homogeneous group of developers. Encouraging diversity in AI research and development teams is vital for building less biased systems.

- Regular Audits and Impact Assessments: Organizations should conduct regular audits of their AI systems to evaluate their performance and identify any biases that may have emerged post-deployment. Impact assessments can help determine how AI affects different demographics.
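
The audit step in the list above could start from something as simple as comparing selection rates across groups. The sketch below is one possible starting point, not a complete audit: the function names, the toy decisions, and the group labels are hypothetical, and the 0.8 cutoff reflects the commonly cited "four-fifths" rule of thumb, used here only as an assumed flagging threshold.

```python
from collections import Counter


def selection_rates(decisions, groups):
    """Share of positive decisions (1 = selected) per group."""
    totals = Counter(groups)
    positives = Counter(g for d, g in zip(decisions, groups) if d == 1)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected, so the rates do not differ
    return min(rates.values()) / highest


# Hypothetical audit sample: ten decisions across two groups.
decisions = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["a"] * 5 + ["b"] * 5

rates = selection_rates(decisions, groups)
ratio = disparate_impact_ratio(rates)
flagged = ratio < 0.8  # assumed threshold; adjust to the applicable standard

print(rates)
print(f"ratio: {ratio:.2f}, flagged: {flagged}")
```

Here group "a" is selected at a rate of 0.8 and group "b" at 0.2, giving a ratio of 0.25, so the sample would be flagged for closer review. A low ratio is a signal to investigate rather than proof of unfair treatment, which is why the human oversight and impact assessments described above still matter.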

Moving Towards Ethical AI

Addressing bias and accountability in machine learning is an ongoing challenge that requires collaboration among technologists, ethicists, policymakers, and the communities affected by AI decisions. Public discourse, education, and awareness all help shape a future where AI is harnessed for the greater good rather than used to reinforce existing inequalities.

As AI technology continues to evolve, so too must our ethical frameworks and governance structures. By prioritizing ethics in AI development, we can work towards a more equitable, accountable future where technology serves all members of society without discrimination or bias. The responsibility lies with all of us—developers, users, and regulators—to ensure that AI uplifts rather than undermines our shared humanity.
